This is an old post. It may contain broken links and outdated information.

My name is Lee, and I am a Mac user.

There, I said it. I’m a dirty, dirty Mac user, and I’m okay with that.

My intent with this blog was for it to remain purely technical, with no personal entries at all; I’ve been down that road before with my last blog and it didn’t end well. However, an article went up this past weekend on Ars where the staff posted pictures of their office desks, and the amount of herpderping in the article’s comments about the mostly-Mac setups was boggling. Maybe it struck me so hard because I consciously avoid platform flame war discussions, having taken part in more than my share in the 80s and 90s; whatever the reason, some of the shit bubbling up to the surface in that article’s discussion thread just blows my mind.

The computing platform you start with might say something about your economic status (can’t afford a Mac, gotta use a hand-me-down Packard Bell!) or your computing ability (“I’ve got a Dell!” “What model?” “….Dell!”), and the computing platform you choose might say something about your goals and preferences (“GONNA DRIVE THE FRAG TRAIN TO TEAMKILL TOWN WITH MY FIFTEEN GRAPHICS CARDS!”), but judging someone’s intelligence and worth as a human being based on whether they’re using a home-built Windows 7 PC or a Mac is ludicrous. I’ve built more PCs from parts in the past quarter-century than any hate-spewing MACS SUCK noob on any discussion board you’d care to name, and yet the computers I use most often throughout any given day have an Apple logo on them.

Things weren’t always thus.

Story time!

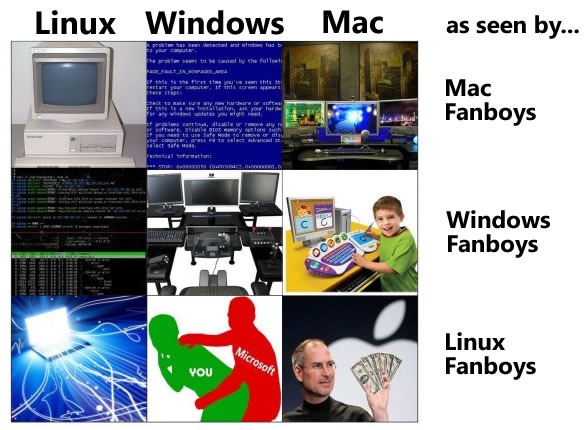

In the darker days of my youth, I frequented the scary devil monastery (Google Groups link for the youngsters and newbs who don’t know what USENET is), and from there I learned one of the two primary axioms of using a computer: “All software sucks, all hardware sucks.” It’s true: every platform has its foibles that must be worked around. I wish I had an attribution for the image above, but I couldn’t find its original source; its point is no less valid. We overlook the flaws of our chosen system, but they’re there, and to others they are laughably huge.

When I was a newly minted nerd, round abouts 1985 or 1986, my dad brought home an IBM PC XT on loan from work, ostensibly so he could do some stuff in the evenings and on weekends as needed. It was one of the dual-floppy models with no hard disk drive, and it had CGA graphics. I was instantly smitten. His work supplied newer computers over time, including an IBM PC AT with EGA graphics a few years later, and a string of games paraded their way across my screen and through my childhood: Oo-Topos, and Transylvania, and Starflight, and Sentinel Worlds, and Space Quest, and so many others.

I grew up PC-centric, playing PC games. BBSs came in my tweenhood, when, newly armed with a shiny external Hayes Smartmodem 2400, I dialed into my first Commodore-powered BBS. I was young and dumb and using whatever default comm program shipped with the Hayes modem (probably BitCom, though that was a very long time ago), which didn’t even support ANSI graphics, and I was surrounded by all these folks using Commodore 64s and crowing about how much better their BBS experience was than mine. At 12, I had discovered flame wars.

Apple Macintoshes were a thing back then, too: a thing used by babies and liberal arts majors, as my teenage self grew to realize. I got my daily dose of Macs in junior high journalism class (LCs and LC IIs and SE/30s), complete with their garbage operating system and their stupid mice. The flame wars were epic, and I was on the front lines, spitting and foaming, because I felt emotionally invested in my PC-centric background. The IBM-compatible world I lived in gave me joy and was obviously awesome, and here were these others saying that their inferior experience was better? Preposterous. I had to set them straight, which surely was simply a matter of finding the right combination of facts and invective!

Not so much.

Skillz with a Z

I grew up, the Internet became a thing, and I parlayed an affinity for building and troubleshooting Wintel PCs into my first job out of college, and from there into a career. I didn’t think anything bad about spending all day fixing PCs at work and then coming home to tinker with my own rig (PROTIP: one’s cobbled-together home-built computer is always referred to as a “rig”, as if it were some kind of high-speed cyberpunk hacking machine). I was swapping video cards before 3D acceleration was a thing. I was elite back when we used to spell it with all the letters intact, back when you were far more likely to call something cool “k-rAd” because “elite” was almost exclusively reserved to refer to those who trafficked in pirated software on fast BBSs into which normals weren’t allowed to dial.

As jobs came and went and I got into my late 20s, a funny thing happened: I got more skilled, and I got more tired.

Before I was 30, I was a system administrator working on what was at the time the second-largest Active Directory deployment in the entire world (IBM’s was bigger than ours). I had left behind support and moved into administration, and I helped solve Windows problems at massive scales. Work was sometimes frustrating but more fun than not, and I learned a lot. My computers at home became better-managed thanks to tricks I learned at work—more secure, with a better network beneath them, with managed updates and all kinds of neat things borrowed from the enterprise. Still, as time wore on and the job got more complex, I had fewer hours in the day at home. The idea of swapping out a motherboard/CPU/HSF for a new one and performing all the gruntwork necessary to make Windows continue functioning after that kind of a switch no longer seemed like a great way to spend an afternoon—it started to sound tiresome.

Two things happened at roughly the same time in late 2007: I started using Linux at work, and I started using Windows Vista at home.

The vista from Vista

I get that Vista was a huge technical leap over Windows XP, and for the most part I liked the operating system. What I didn’t like was how ridiculously difficult it was to make it work correctly with two Nvidia video cards in SLI. At the time, I was rocking a pair of GeForce 7900GT cards, and Nvidia’s initial attempts at an SLI mode that worked well with Vista’s gewgaws were…not good. There were crashes, but worse, performance was in the toilet just while puttering around the UI. I could disable SLI and have it come on only when gaming, but that entailed lots of extra configuration work. And I didn’t want to go back to Windows XP because, like most nerds, I had preached the importance of backups without actually having any myself.

At the same time, my role at work had shifted from sysadmin to storage architect, and I began spending huge chunks of my day logged into an EMC Celerra NAS device, which used a pair of redundant 1U pizza box servers running Linux as management stations. It was my first encounter with a shell that wasn’t DOS or a DOS descendant, and though my first steps were halting (including, embarrassingly, alias dir='ls -al', the sure sign of a Windows sysadmin out of his depth), as the weeks and months passed I grew to depend on being able to quickly bang out bash scripts to automate things.

I wanted that at home. I wanted that customizability and control, wanted to be able to quickly bang out a loop to repeat a command a bunch of times without having to write a batch file. I wanted that, but I was too terrified to actually make the switch to Linux. After all, if I wanted to screw around and tinker with half-broken drivers and applications all day, I could just keep Vista. I’d heard the exaggerated horror stories of having to “compile” one’s own drivers, or of casual users having to be expert programmers and debuggers just to get their systems to boot. So, I performed an experiment: I convinced my parents to dump their spyware-riddled Dell with XP Home and switch to an iMac.

Here, have some Kool-Aid

Their transition was spread across two weekends of hard work, but after I got them settled, they quickly took to the new system. Gmail and the web functioned much the same, which was 90% of what they needed. More than that, spending so much keyboard time in front of OS X brought home to me that here was a system with the Linux-like attributes on which I’d grown to depend, coupled with a slick GUI that actually worked and an ecosystem of good software that I wouldn’t have to code (or, more accurately, fail at coding) myself.

So, in November 2007, just after Thanksgiving, I ditched my overclocked Athlon 64 FX-60 with SLI for a brand new 24″ aluminum iMac, running the just-released OS X 10.5 “Leopard” operating system.

Rough parity

I’ve had a few Macs since then, and switching is a decision I’ve never regretted. These days I don’t game nearly as much as I used to, so I see Windows 7 only once every few weeks or months, when I reboot into it to play something that doesn’t have a Mac version. I don’t script as often as I used to, but fiddling with Linux on servers (described everywhere else in this blog) is certainly easier from an OS X terminal window than it would be in PuTTY. I’ve even started using the fish shell in place of bash, and the day you switch from the default shell to one that you picked yourself is kind of a big day in your nerd life 🙂

I stick with OS X on Apple hardware because it’s easy, and because I am used to the OS X workflow for common tasks. I stick with it because applications are easily installed and uninstalled (dragging a single icon to the trash still blows my mind after having fought many times with the remnants of broken & un-uninstallable Windows applications). But, above all else, at this point I stick with OS X because I like it. I prefer it. The computers are more expensive than equivalent PC hardware (though that’s debatable, and trying to determine exactly how much more expensive gets into complex value-proposition definitions and a lot of math), but my wife & I have been blessed with jobs that pay well, and I’m at a point in my life where I can pretty much buy whatever the fuck I want, whenever I want. I’m not too concerned that computer #1 costs a few hundred bucks more than computer #2.

What irks me, though, are posts like this:

> I also find comments such as “OS X stays out of my way” and “lets me get my work done” to be incredibly weak….You then go on to basically say “been there done that, doesn’t appeal to me now that I’m older”, as if building custom machines, tinkering and upgrading is the pastime of children who don’t have any responsibilities or aren’t doing real work on them. Really?!?
>
> …If you want to take offence to someone saying that Ars employees don’t have any special insight into what computer to buy, you could do a lot more to justify your choice with actual technical information (specs, software, value) instead of resorting to the same old vague claims that amount to nothing but personal preference.

The obvious fallacy the poster makes is the same one that lots of geeks make—that “specs” tell the whole story of a device’s capability and desirability. Worse, though, is the implication that a technology writer who prefers a platform for non-benchmarkable reasons isn’t qualified to dispense technology advice—that the choice of computing platform must be arrived at like a mathematical proof, or the technologist is incapable of being trusted for anything.

I use OS X because I like it. I don’t like Windows 7 nearly as much, and when I do use it I miss the terminal. While major apps (Photoshop, for example) are largely the same between platforms, I find that the niche apps I’ve collected on OS X to make my life easier are simply better (better made, prettier, easier to use, and smoother overall) than the collection of niche apps I’d amassed after 10+ years on Windows 95 through Windows 7. Compare, for example, my graphical SCP application of choice on OS X to the shit Windows users have to put up with. Does WinSCP work? Of course, and quite well and quite quickly; I used it every day for years. But Flow certainly works better, in my opinion, and that opinion is why I’m on this platform.

Get off my damn lawn

Maybe it comes down to just not caring anymore about getting my hands dirty. This blog and all its posts about tweaking configuration files may undermine that, but while I do no small amount of screwing around with servers, I very much enjoy a non-tweakable desktop experience. I like trying new applications as much as the next guy, but I sure as hell don’t miss having to update my video card drivers every month & re-do three different registry hacks to make my taskbar span multiple monitors, or installing bloated printer driver application packs, or having to manually edit my mouse’s XML files to make all the buttons correctly reprogrammable (fuck you on that one, Logitech). I sure as hell don’t miss product activation, Windows Genuine Advantage, and having my operating system treat me like a thief and a liar.

I am technical enough to thrive on any operating system. I am not a newb, a poseur, or an idiot. I choose OS X, but I don’t begrudge you if you choose something else. Begrudging you for it would make me an idiot.